Entropy (information theory)

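In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$, the entropy is

$$H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x),$$

where the base of the logarithm sets the unit (base 2 gives bits).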
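To make the definition concrete, here is a minimal Python sketch, assuming the standard Shannon formula $H(X) = -\sum_x p(x) \log p(x)$ and the usual convention that outcomes with zero probability contribute nothing. The function name `shannon_entropy` and its `base` parameter are illustrative choices, not part of the source.

```python
import math


def shannon_entropy(probs, base=2.0):
    """Entropy of a discrete distribution given as a sequence of probabilities.

    Hypothetical helper for illustration; base=2 yields a result in bits.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Skip p == 0 terms, following the convention 0 * log(0) = 0.
    return -sum(p * math.log(p, base) for p in probs if p > 0)


fair_coin = shannon_entropy([0.5, 0.5])      # one fair coin toss
biased_coin = shannon_entropy([0.9, 0.1])    # a heavily biased coin
print(fair_coin, biased_coin)
```

A fair coin gives 1 bit, while the biased coin gives less than 1 bit, since its outcome is more predictable and so carries less surprise on average.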

Two bits of entropy: in the case of two fair coin tosses, the information entropy in bits is the base-2 logarithm of the number of equally likely outcomes. With two coins there are four possible outcomes, and therefore two bits of entropy. Generally, information entropy is the average amount of information conveyed by an event, when considering all possible outcomes.
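As a worked check of the two-coin figure, assuming the standard definition $H(X) = -\sum_x p(x) \log_2 p(x)$ and four equally likely outcomes (HH, HT, TH, TT), each with probability $1/4$:

$$
H(X) = -\sum_{i=1}^{4} \tfrac{1}{4} \log_2 \tfrac{1}{4} = -4 \cdot \tfrac{1}{4} \cdot (-2) = 2 \text{ bits}.
$$

More generally, under the same assumption, $n$ equally likely outcomes give $H(X) = \log_2 n$ bits, which is why the base-2 logarithm of the outcome count appears in the caption.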

Claude Shannon

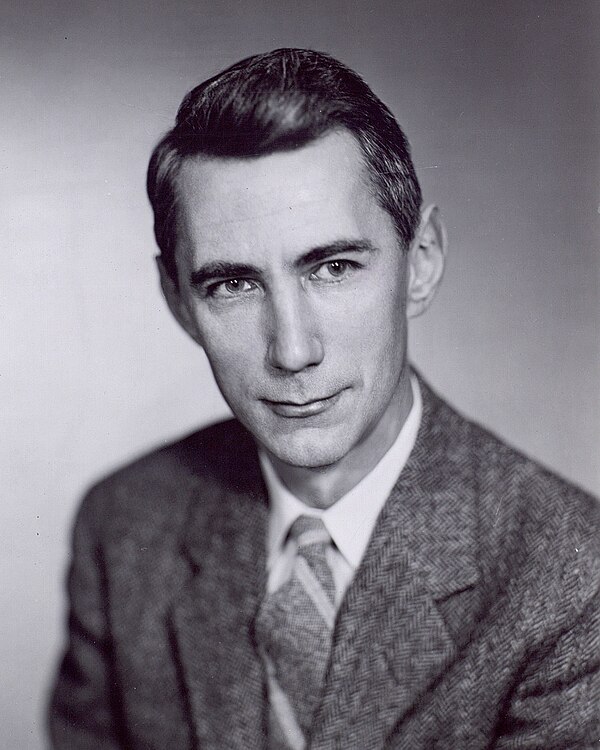

Claude Elwood Shannon was an American mathematician, electrical engineer, computer scientist and cryptographer known as the "father of information theory". He was the first to describe the Boolean gates that are essential to all digital electronic circuits, and he built the first machine learning device, thus founding the field of artificial intelligence. He is credited, alongside George Boole, with laying the foundations of the Information Age.

Shannon c. 1950s

The Minivac 601, a digital computer trainer designed by Shannon

Statue of Claude Shannon at AT&T Shannon Labs

Theseus Maze in MIT Museum