Sprite (computer graphics)

In computer graphics, a sprite is a two-dimensional bitmap that is integrated into a larger scene, most often in a 2D video game. Originally, the term sprite referred to fixed-sized objects composited together, by hardware, with a background. Use of the term has since become more general.
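Classic sprite hardware typically mixed sprites with the background as the picture was generated, rather than writing them into memory. Software renderers get the same visual result by copying the sprite's pixels onto a frame. The following is a minimal Python sketch of that software approach. Several details are illustrative assumptions and do not describe any particular hardware: the function name `blit_sprite`, storing a frame as rows of palette indices, and using `None` to mark transparent sprite pixels.

```python
from typing import List, Optional

# Hypothetical layout for this sketch: a frame is a list of rows of palette
# indices, and None marks a transparent sprite pixel.
Frame = List[List[int]]
Sprite = List[List[Optional[int]]]


def blit_sprite(background: Frame, sprite: Sprite, x: int, y: int) -> Frame:
    """Return a copy of background with sprite's top-left corner at (x, y).

    Transparent (None) sprite pixels leave the background visible, and any
    part of the sprite that falls outside the frame is clipped.
    """
    frame = [row[:] for row in background]  # copy so the background is untouched
    height = len(frame)
    width = len(frame[0]) if height else 0
    for sy, sprite_row in enumerate(sprite):
        fy = y + sy
        if not 0 <= fy < height:
            continue  # clip rows above or below the frame
        for sx, pixel in enumerate(sprite_row):
            fx = x + sx
            if pixel is None or not 0 <= fx < width:
                continue  # skip transparent pixels and clip columns
            frame[fy][fx] = pixel
    return frame


# Usage: a 2x2 sprite placed near the right edge of a 4x3 background.
background = [[0] * 4 for _ in range(3)]
sprite = [[7, None], [7, 7]]
frame = blit_sprite(background, sprite, 3, 1)
print(frame)  # → [[0, 0, 0, 0], [0, 0, 0, 7], [0, 0, 0, 7]]
```

The sketch returns a new frame instead of modifying the background. This way the same background can be reused when the sprite moves, which loosely mirrors how hardware sprites never altered the underlying playfield.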

Tank and rocket sprites from Broforce

Computer graphics deals with generating images and art with the aid of computers. Today, computer graphics is a core technology in digital photography, film, video games, digital art, cell phone and computer displays, and many specialized applications. A great deal of specialized hardware and software has been developed, and the displays of most devices are driven by computer graphics hardware. It is a vast and recently developed area of computer science. The phrase was coined in 1960 by computer graphics researchers Verne Hudson and William Fetter of Boeing. It is often abbreviated as CG or, typically in the context of film, as computer-generated imagery (CGI). The non-artistic aspects of computer graphics are the subject of computer science research.

A Blender screenshot displaying the 3D test model Suzanne

Spacewar! running on the Computer History Museum's PDP-1

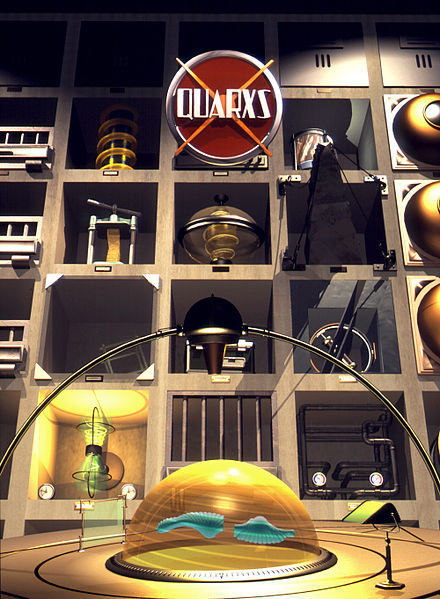

Quarxs, series poster, Maurice Benayoun, François Schuiten, 1992

A screenshot from the video game Killing Floor, built in Unreal Engine 2. Personal computers and console video games took a great graphical leap forward in the 2000s. They became able to display graphics in real time that had previously been possible only pre-rendered and/or on business-level hardware.