Confirmation bias

Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms or supports one's prior beliefs or values. People display this bias when they select information that supports their views while ignoring contrary information, or when they interpret ambiguous evidence as supporting their existing attitudes. The effect is strongest for desired outcomes, for emotionally charged issues, and for deeply entrenched beliefs. Most people cannot overcome confirmation bias entirely, but they can manage it, for example, through education and training in critical thinking skills.

Confirmation bias has been described as an internal "yes man", echoing back a person's beliefs like Charles Dickens's character Uriah Heep.

An MRI scanner allowed researchers to examine how the human brain deals with dissonant information.

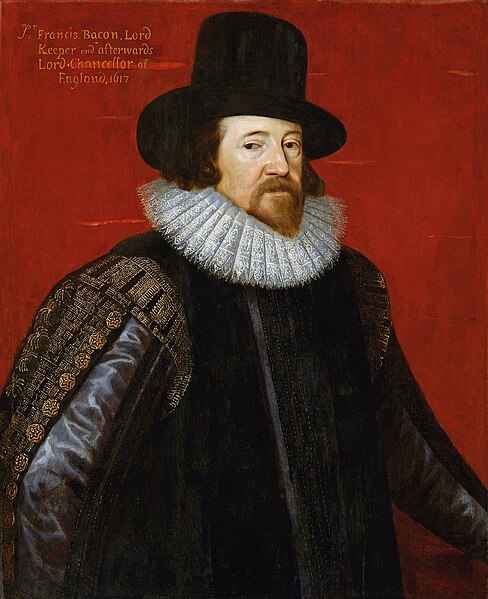

Francis Bacon

Happy events are more likely to be remembered.

Wishful thinking

Wishful thinking is the formation of beliefs based on what might be pleasing to imagine, rather than on evidence, rationality, or reality. It is a product of resolving conflicts between belief and desire. Methodologies to examine wishful thinking are diverse. Various disciplines and schools of thought examine related mechanisms such as neural circuitry, human cognition and emotion, types of bias, procrastination, motivation, optimism, attention and environment. This concept has been examined as a fallacy. It is related to the concept of wishful seeing.

Illustration from St. Nicholas: an Illustrated Magazine for Young Folks (1884) of a child imagining that a small toy horse might pull his cart

Focused attention