In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder.
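The encoder/decoder distinction and the lossless/lossy split can be illustrated with a minimal sketch. It uses Python's standard `zlib` module for the lossless case. For the lossy case it uses a deliberately crude scheme, chosen here only for illustration: discarding the low-order bits of each sample. The function names and the `drop_bits` parameter are hypothetical and do not come from any particular codec.

```python
import zlib


def lossless_encode(data: bytes) -> bytes:
    """Encoder: remove statistical redundancy with DEFLATE (via zlib)."""
    return zlib.compress(data, 9)


def lossless_decode(encoded: bytes) -> bytes:
    """Decoder: recover the original bytes exactly."""
    return zlib.decompress(encoded)


def lossy_encode(samples: list[int], drop_bits: int) -> list[int]:
    """Encoder: keep only the high-order bits of each non-negative sample.

    Illustrative assumption: the dropped low-order bits are treated as the
    'less important' information.
    """
    return [s >> drop_bits for s in samples]


def lossy_decode(codes: list[int], drop_bits: int) -> list[int]:
    """Decoder: rescale the codes; the dropped bits cannot be recovered."""
    return [c << drop_bits for c in codes]


if __name__ == "__main__":
    # Highly redundant input compresses well and round-trips exactly.
    original = b"abab" * 256
    packed = lossless_encode(original)
    print(len(original), "->", len(packed), "bytes")
    print("exact round trip:", lossless_decode(packed) == original)

    # 8-bit samples stored in 4 bits each: smaller, but only approximate.
    samples = [0, 17, 200, 255]
    codes = lossy_encode(samples, 4)
    print(codes)                    # [0, 1, 12, 15]
    print(lossy_decode(codes, 4))   # [0, 16, 192, 240]
```

In the lossless case the decoder's output is identical to the input, while in the lossy case it is only an approximation: each 8-bit sample is stored in 4 bits, and the discarded detail is gone for good.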

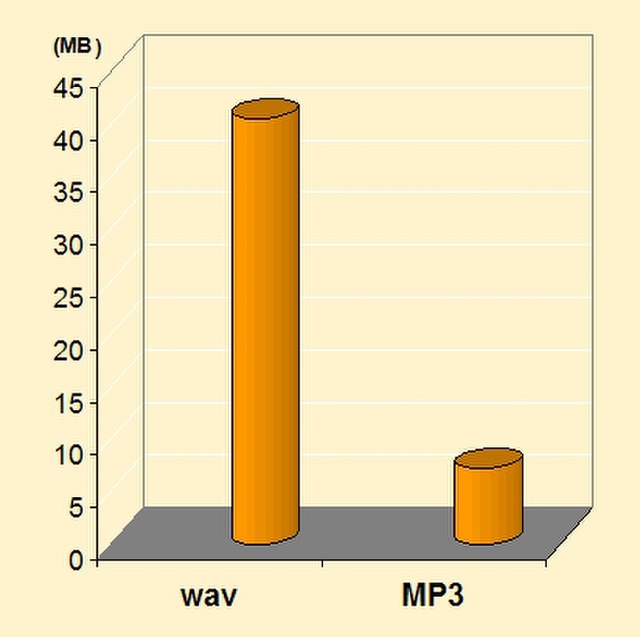

MP3, an example of a lossy file format compared to WAV.

Comparison of spectrograms of audio in an uncompressed format and several lossy formats. The lossy spectrograms show bandlimiting of higher frequencies, a common technique associated with lossy audio compression.

Solidyne 922: The world's first commercial audio bit compression sound card for PC, 1990

Information is an abstract concept that refers to something which has the power to inform. At the most fundamental level, it pertains to the interpretation of what may be sensed, or of abstractions of it. Any natural process that is not completely random and any observable pattern in any medium can be said to convey some amount of information. Whereas digital signals and other data use discrete signs to convey information, other phenomena and artifacts such as analogue signals, poems, pictures, music or other sounds, and currents convey information in a more continuous form. Information is not knowledge itself, but the meaning that may be derived from a representation through interpretation.

Galactic (including dark) matter distribution in a cubic section of the Universe

Information embedded in an abstract mathematical object with a symmetry-breaking nucleus

Visual representation of a strange attractor, with converted data of its fractal structure