In the mathematical field of dynamical systems, an attractor is a set of states toward which a system tends to evolve, for a wide variety of starting conditions of the system. System values that get close enough to the attractor values remain close even if slightly disturbed.
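As a minimal sketch of this idea, the following Python snippet iterates the logistic map x → r·x·(1 − x) with r = 2.5. The choice of map, the parameter value, and the function names are assumptions made for illustration. This map has a single attracting fixed point at 1 − 1/r = 0.6, which is a much simpler attractor than the strange attractor pictured below. Several different starting values end up at the same state, and a slightly disturbed state returns to it.

```python
def logistic_step(x, r=2.5):
    """One step of the logistic map x -> r * x * (1 - x)."""
    return r * x * (1.0 - x)


def iterate(x0, steps=200, r=2.5):
    """Apply the map `steps` times starting from x0 and return the final state."""
    x = x0
    for _ in range(steps):
        x = logistic_step(x, r)
    return x


# For r = 2.5 the attracting fixed point is 1 - 1/r = 0.6.
fixed_point = 1.0 - 1.0 / 2.5

# A variety of starting conditions all evolve toward the same state.
finals = [iterate(x0) for x0 in (0.1, 0.3, 0.5, 0.9)]

# A state slightly disturbed away from the attractor is pulled back to it.
perturbed = iterate(fixed_point + 0.01, steps=50)

print(finals, perturbed)
```

Near the fixed point each step roughly halves the distance to it, which is why a few dozen iterations are enough for the disturbed state to settle again.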

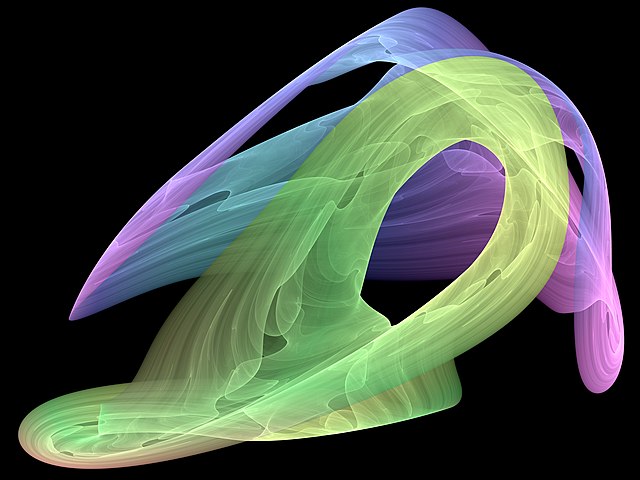

Visual representation of a strange attractor.

In mathematics, a fractal is a geometric shape containing detailed structure at arbitrarily small scales, usually having a fractal dimension strictly exceeding the topological dimension. Many fractals appear similar at various scales, as illustrated in successive magnifications of the Mandelbrot set. This exhibition of similar patterns at increasingly smaller scales is called self-similarity, also known as expanding symmetry or unfolding symmetry; if this replication is exactly the same at every scale, as in the Menger sponge, the shape is called affine self-similar. Fractal geometry lies within the mathematical branch of measure theory.
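For exactly self-similar shapes, one common notion of fractal dimension is the similarity dimension D = log N / log s, where the shape is made of N copies of itself, each scaled down by a factor s. The sketch below assumes this formula, which the text above does not state, and uses an illustrative function name. It shows that the Menger sponge (20 copies at scale 1/3) has a dimension near 2.727, well above its topological dimension of 1.

```python
import math


def similarity_dimension(copies, scale_factor):
    """Similarity dimension log(N) / log(s) of an exactly self-similar shape.

    copies:       number N of smaller copies the shape is made of
    scale_factor: factor s by which each copy is shrunk (s > 1)
    """
    return math.log(copies) / math.log(scale_factor)


# Menger sponge: 20 sub-cubes, each 1/3 the edge length (about 2.727).
menger = similarity_dimension(20, 3)

# Koch curve: 4 segments, each 1/3 the length (about 1.262).
koch = similarity_dimension(4, 3)

# Sierpinski triangle: 3 copies, each 1/2 the size (about 1.585).
sierpinski = similarity_dimension(3, 2)

print(menger, koch, sierpinski)
```

The same formula gives whole numbers for ordinary shapes: a square split into 4 copies at scale 1/2 yields log 4 / log 2 = 2.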

Mandelbrot set at islands

The Mandelbrot set: its boundary is a fractal curve with Hausdorff dimension 2. (The colored regions of the image are not part of the Mandelbrot set; their colors indicate how quickly the iteration that defines the set diverges at each point.)
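The coloring described in this caption is commonly produced with an escape-time count. The sketch below assumes the standard iteration z → z² + c starting from z = 0 and the usual escape radius of 2. The function name, the iteration cap, and the sample points are illustrative and do not come from the image's actual rendering code.

```python
def escape_time(c, max_iter=100):
    """Return the step at which |z| first exceeds 2 under z -> z*z + c.

    Returns max_iter if the orbit stays bounded for all tested steps, in
    which case c is treated as belonging to the Mandelbrot set.
    """
    z = 0j
    for n in range(max_iter):
        z = z * z + c
        if abs(z) > 2.0:
            return n  # diverged: a renderer would map n to a color
    return max_iter  # did not diverge within the cap


# Points whose orbit stays bounded hit the cap (drawn as "inside").
inside = [escape_time(0j), escape_time(-1 + 0j)]

# Points outside the set escape after a few steps; a smaller count
# means faster divergence.
outside = [escape_time(1 + 0j), escape_time(2 + 0j), escape_time(3 + 0j)]

print(inside, outside)
```

A renderer would evaluate this function on a grid of complex numbers and map each returned count to a color, which is why only the points that reach the cap depict the set itself.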

Mandelbrot set with 12 encirclements

2x 120 degrees recursive IFS