Computational fluid dynamics

Computational fluid dynamics (CFD) is a branch of fluid mechanics that uses numerical analysis and data structures to analyze and solve problems that involve fluid flows. Computers perform the calculations required to simulate the free-stream flow of the fluid and the interaction of the fluid with surfaces defined by boundary conditions. High-speed supercomputers yield better solutions and are often required to solve the largest and most complex problems. Ongoing research yields software that improves the accuracy and speed of complex simulation scenarios such as transonic or turbulent flows. Initial validation of such software is typically performed using experimental apparatus such as wind tunnels. In addition, previously performed analytical or empirical analysis of a particular problem can be used for comparison. Final validation is often performed using full-scale testing, such as flight tests.
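As a minimal illustration of this workflow (discretize a flow equation, impose boundary conditions, then compare against a known analytical result), the sketch below solves steady, pressure-driven laminar flow between two parallel plates with second-order finite differences. The problem choice, the function names `solve_channel_flow` and `analytic_channel_flow`, and all parameter values are assumptions made for illustration; production CFD codes solve far more general equations on two- or three-dimensional meshes.

```python
# Steady laminar flow between parallel plates (plane Poiseuille flow):
#     mu * d2u/dy2 = dp/dx,   u(0) = u(height) = 0   (no-slip walls)
# Illustrative sketch only; names and parameter values are hypothetical.

def solve_channel_flow(n=51, height=1.0, mu=1.0, dpdx=-2.0):
    """Return grid points y and the finite-difference velocity profile u."""
    dy = height / (n - 1)
    y = [i * dy for i in range(n)]
    m = n - 2  # number of interior unknowns

    # Central differences give a tridiagonal system for the interior nodes:
    #     u[i-1] - 2*u[i] + u[i+1] = dy**2 * dpdx / mu
    # The wall values are zero, so they add nothing to the right-hand side.
    lower = [1.0] * m
    diag = [-2.0] * m
    upper = [1.0] * m
    rhs = [dy * dy * dpdx / mu] * m

    # Thomas algorithm, forward elimination.
    for i in range(1, m):
        w = lower[i] / diag[i - 1]
        diag[i] -= w * upper[i - 1]
        rhs[i] -= w * rhs[i - 1]

    # Thomas algorithm, back substitution.
    interior = [0.0] * m
    interior[-1] = rhs[-1] / diag[-1]
    for i in range(m - 2, -1, -1):
        interior[i] = (rhs[i] - upper[i] * interior[i + 1]) / diag[i]

    # Attach the no-slip boundary values at both walls.
    u = [0.0] + interior + [0.0]
    return y, u


def analytic_channel_flow(y, height=1.0, mu=1.0, dpdx=-2.0):
    """Exact parabolic profile u(y) = -(dp/dx) / (2*mu) * y * (height - y)."""
    return [-dpdx / (2.0 * mu) * yi * (height - yi) for yi in y]


y, u = solve_channel_flow()
u_exact = analytic_channel_flow(y)
max_error = max(abs(a - b) for a, b in zip(u, u_exact))
print(f"max |u_numeric - u_exact| = {max_error:.2e}")
```

Because the exact profile is a parabola and the central-difference stencil is exact for quadratics, the discrepancy here should sit at round-off level. For realistic flows the numerical and reference solutions differ by a discretization error, which is why comparison against experiments and full-scale tests remains necessary.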

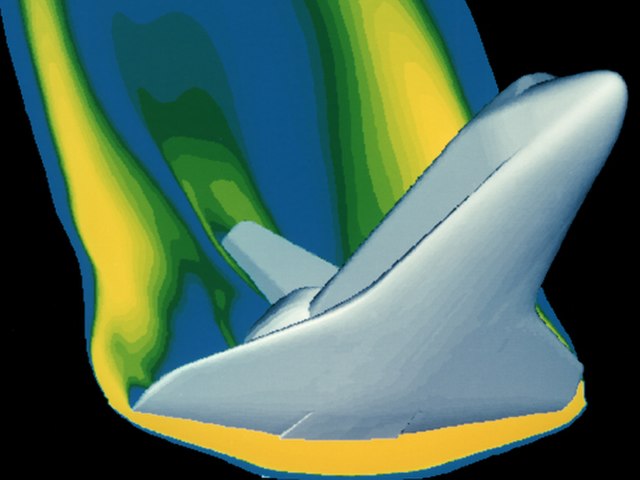

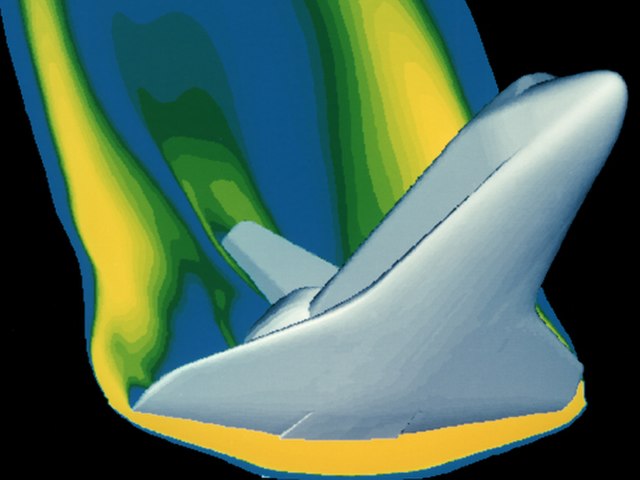

A computer simulation of high-velocity airflow around the Space Shuttle during re-entry

A simulation of the Hyper-X scramjet vehicle in operation at Mach 7

Volume rendering of a non-premixed swirl flame as simulated by LES

Simulation of a bubble horde using the volume of fluid method

In physics, physical chemistry and engineering, fluid dynamics is a subdiscipline of fluid mechanics that describes the flow of fluids—liquids and gases. It has several subdisciplines, including aerodynamics and hydrodynamics. Fluid dynamics has a wide range of applications, including calculating forces and moments on aircraft, determining the mass flow rate of petroleum through pipelines, predicting weather patterns, understanding nebulae in interstellar space and modelling fission weapon detonation.

The transition from laminar to turbulent flow