Digital data, in information theory and information systems, is information represented as a string of discrete symbols, each of which takes one of a finite number of values from some alphabet, such as letters or digits. An example is a text document, which consists of a string of alphanumeric characters. The most common form of digital data in modern information systems is binary data, which is represented by a string of binary digits (bits), each of which has one of two values, 0 or 1.
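As a minimal illustration of the two representations described above, the following Python sketch turns a short text string (symbols from an alphanumeric alphabet) into binary data (symbols from the alphabet {0, 1}) and back. The function names `text_to_bits` and `bits_to_text` are hypothetical, and the choice of UTF-8 with 8 bits per byte is an assumption made for the example; other encodings would yield different bit strings.

```python
def text_to_bits(text: str) -> str:
    """Encode text as UTF-8 and return its bytes as a string of '0' and '1'."""
    # Each byte is written as exactly 8 binary digits, most significant bit first.
    return "".join(f"{byte:08b}" for byte in text.encode("utf-8"))


def bits_to_text(bits: str) -> str:
    """Inverse of text_to_bits: group the bits into bytes and decode as UTF-8."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")


bits = text_to_bits("Hi")
print(bits)                # 0100100001101001
print(bits_to_text(bits))  # Hi
```

Both strings carry the same information; only the alphabet differs. The text form uses two symbols from a large alphabet, while the binary form uses sixteen symbols from an alphabet of size two.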

Digital clock. The time shown by the digits on the face at any instant is digital data. The actual precise time is analog data.

Electronics is a scientific and engineering discipline that studies and applies the principles of physics to design, create, and operate devices that manipulate electrons and other electrically charged particles. It is a subfield of electrical engineering that uses active devices such as transistors, diodes, and integrated circuits to control and amplify the flow of electric current and to convert it from one form to another, such as from alternating current (AC) to direct current (DC) or from analog signals to digital signals.
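The conversion from analog to digital signals mentioned above can be sketched numerically. The Python example below is a simplified model of uniform quantization, not a description of any particular converter circuit: the function name `quantize`, the 0 to 1 volt input range, and the 3-bit resolution are all assumptions chosen for illustration.

```python
import math


def quantize(voltage: float, v_min: float = 0.0, v_max: float = 1.0, bits: int = 3) -> int:
    """Map a continuous voltage to one of 2**bits integer codes (uniform quantization)."""
    levels = 2 ** bits
    # Clamp out-of-range inputs to the assumed converter range.
    clamped = min(max(voltage, v_min), v_max)
    # Scale to [0, levels] and truncate to an integer code.
    code = int((clamped - v_min) / (v_max - v_min) * levels)
    # The top of the range would otherwise map to `levels`, which is out of range.
    return min(code, levels - 1)


# Sample one period of a sine wave (the "analog" signal) at 8 instants.
samples = [0.5 + 0.5 * math.sin(2 * math.pi * n / 8) for n in range(8)]
codes = [quantize(v) for v in samples]
print(codes)  # a list of 8 integers, each between 0 and 7
```

Each sample can take any value in a continuous range, but each output code is one of only eight values, so the result is digital data in the sense defined earlier.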

Modern surface-mount electronic components on a printed circuit board, with a large integrated circuit at the top

One of the earliest Audion radio receivers, constructed by De Forest in 1914

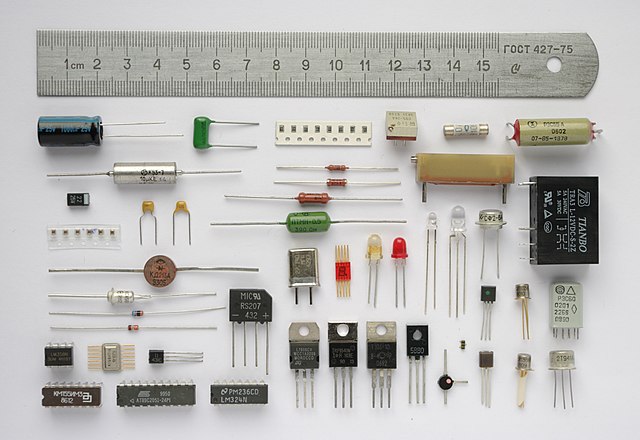

Various electronic components

Through-hole devices mounted on the circuit board of a mid-1980s home computer. Axial-lead devices are at upper left, while blue radial-lead capacitors are at upper right.