Distance

Distance is a numerical or occasionally qualitative measurement of how far apart objects or points are. In physics or everyday usage, distance may refer to a physical length or an estimation based on other criteria. Since spatial cognition is a rich source of conceptual metaphors in human thought, the term is also frequently used metaphorically to mean a measurement of the amount of difference between two similar objects or a degree of separation. Most such notions of distance, both physical and metaphorical, are formalized in mathematics using the notion of a metric space.
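As a rough illustration of what the metric-space formalization requires, the sketch below checks the usual axioms (non-negativity, zero distance only between identical points, symmetry, and the triangle inequality) over a small finite sample. The function names `euclidean`, `hamming`, `squared_euclidean`, and `satisfies_metric_axioms` are hypothetical and chosen only for this example; passing the check on a sample does not prove a function is a metric in general.

```python
import math
from itertools import product


def euclidean(p, q):
    """Physical-style distance: straight-line length between two points."""
    return math.dist(p, q)


def hamming(a, b):
    """Metaphorical-style distance: number of positions where two
    equal-length strings differ."""
    if len(a) != len(b):
        raise ValueError("strings must have equal length")
    return sum(x != y for x, y in zip(a, b))


def squared_euclidean(p, q):
    """Not a metric: squaring breaks the triangle inequality."""
    return math.dist(p, q) ** 2


def satisfies_metric_axioms(d, points, tol=1e-9):
    """Check the metric axioms for d on a finite sample of points."""
    for x, y in product(points, repeat=2):
        if d(x, y) < 0:                        # non-negativity
            return False
        if (d(x, y) <= tol) != (x == y):       # zero only for identical points
            return False
        if abs(d(x, y) - d(y, x)) > tol:       # symmetry
            return False
    for x, y, z in product(points, repeat=3):
        if d(x, z) > d(x, y) + d(y, z) + tol:  # triangle inequality
            return False
    return True


line_points = [(0.0,), (1.0,), (2.0,)]
words = ["cat", "cot", "cog"]

euclidean_ok = satisfies_metric_axioms(euclidean, line_points)
hamming_ok = satisfies_metric_axioms(hamming, words)
squared_ok = satisfies_metric_axioms(squared_euclidean, line_points)
```

On these samples the Euclidean and Hamming distances pass, while the squared Euclidean distance fails because going from 0 to 2 directly costs 4, more than the two unit steps through 1.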

A board showing distances near Visakhapatnam, India

Space

Space is a three-dimensional continuum containing positions and directions. In classical physics, physical space is often conceived in three linear dimensions. Modern physicists usually consider it, together with time, to be part of a boundless four-dimensional continuum known as spacetime. The concept of space is considered to be of fundamental importance to an understanding of the physical universe. However, disagreement continues among philosophers over whether it is itself an entity, a relationship between entities, or part of a conceptual framework.

Gottfried Leibniz

Isaac Newton

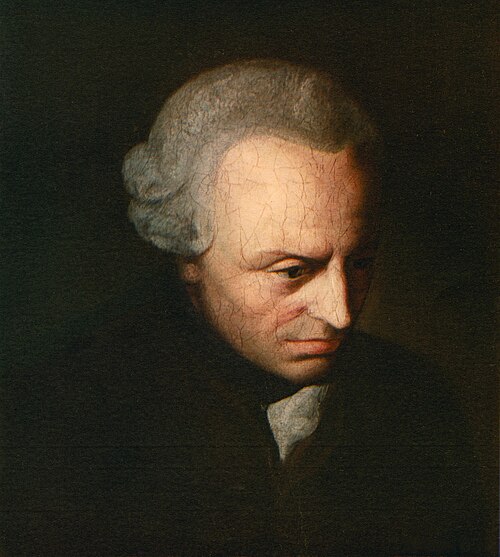

Immanuel Kant

Carl Friedrich Gauss