Fermat's principle, also known as the principle of least time, is the link between ray optics and wave optics. It states that the path taken by a ray between two given points is the path that can be traveled in the least time.

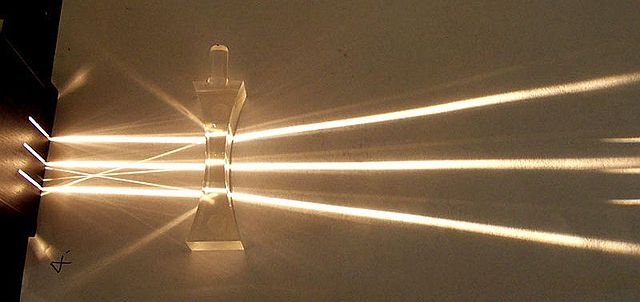

Fig. 3: An experiment demonstrating refraction (and partial reflection) of rays – approximated by, or contained in, narrow beams
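As a rough numerical illustration of the least-time statement in the refraction case, the sketch below searches for the point at which a ray should cross a flat interface so that its total travel time is smallest, then checks that the result agrees with Snell's law (n1 sin θ1 = n2 sin θ2). The geometry, the function names (`travel_time`, `least_time_crossing`, `snell_sines`), and the refractive indices 1.0 and 1.5 are assumptions chosen for illustration; they do not come from the experiment in the figure.

```python
import math


def travel_time(x, a, b, d, n1, n2):
    """Travel time (in units where c = 1) from (0, a) to (d, -b),
    crossing the interface y = 0 at the point (x, 0).

    Medium 1 (index n1) lies above the interface, medium 2 (index n2) below.
    """
    return n1 * math.hypot(x, a) + n2 * math.hypot(d - x, b)


def least_time_crossing(a, b, d, n1, n2, tol=1e-10):
    """Golden-section search for the crossing point x in [0, d]
    that minimizes the travel time. The time is a convex function
    of x, so it has a single minimum on the interval."""
    inv_phi = (math.sqrt(5) - 1) / 2
    lo, hi = 0.0, d
    while hi - lo > tol:
        x1 = hi - inv_phi * (hi - lo)
        x2 = lo + inv_phi * (hi - lo)
        if travel_time(x1, a, b, d, n1, n2) < travel_time(x2, a, b, d, n1, n2):
            hi = x2  # minimum lies in [lo, x2]
        else:
            lo = x1  # minimum lies in [x1, hi]
    return (lo + hi) / 2


def snell_sines(x, a, b, d):
    """Sines of the angles of incidence and refraction, measured
    from the normal to the interface, for a crossing at (x, 0)."""
    sin_incidence = x / math.hypot(x, a)
    sin_refraction = (d - x) / math.hypot(d - x, b)
    return sin_incidence, sin_refraction


if __name__ == "__main__":
    # Assumed example values: source 1 unit above the interface,
    # target 1 unit below and 2 units across, indices 1.0 and 1.5.
    a, b, d, n1, n2 = 1.0, 1.0, 2.0, 1.0, 1.5
    x = least_time_crossing(a, b, d, n1, n2)
    s1, s2 = snell_sines(x, a, b, d)
    print("crossing point x:", x)
    print("n1 * sin(theta1):", n1 * s1)
    print("n2 * sin(theta2):", n2 * s2)
```

The search uses only travel times and never the law of refraction itself, so the agreement between the two printed products is a consequence of minimizing the time, not something built into the code. A golden-section search was chosen for simplicity; any one-dimensional minimizer would serve equally well.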

Pierre de Fermat (1607–1665)

Christiaan Huygens (1629–1695)

Pierre-Simon Laplace (1749–1827)

Pierre de Fermat was a French mathematician who is credited with early developments that led to infinitesimal calculus, including his technique of adequality. In particular, he is recognized for his research into number theory and for his discovery of an original method of finding the greatest and the smallest ordinates of curved lines, which is analogous to that of differential calculus, then unknown. He made notable contributions to analytic geometry, probability, and optics. He is best known for Fermat's principle for light propagation and for Fermat's Last Theorem in number theory, which he described in a note in the margin of a copy of Diophantus's Arithmetica. He was also a lawyer at the Parlement of Toulouse, France.

Pierre de Fermat, 17th century painting by unknown author

Pierre de Fermat, 17th-century painting by Rolland Lefebvre

The 1670 edition of Diophantus's Arithmetica includes Fermat's commentary, referred to as his "Last Theorem" (Observatio Domini Petri de Fermat), posthumously published by his son

Place of burial of Pierre de Fermat in Place Jean Jaurès, Castres. Translation of the plaque: In this place was buried on January 13, 1665, Pierre de Fermat, councillor at the Chambre de l'Édit (a court established by the Edict of Nantes) and mathematician of great renown, celebrated for his theorem, $a^n + b^n \neq c^n$ for $n > 2$

![Pierre de Fermat, 17th-century painting by Rolland Lefebvre](https://upload.wikimedia.org/wikipedia/commons/thumb/3/3b/Pierre_de_Fermat3.jpg/485px-Pierre_de_Fermat3.jpg)