Half-life is the time required for a quantity to decrease to half of its initial value. The term is commonly used in nuclear physics to describe how quickly unstable atoms undergo radioactive decay, or equivalently how long such atoms survive. It is also used more generally to characterize any kind of exponential decay. For example, the medical sciences refer to the biological half-life of drugs and other chemicals in the human body. The counterpart of half-life for exponential growth is doubling time.
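For an ideal exponential decay $N(t) = N_0 e^{-\lambda t}$, the remaining fraction after time $t$ is $N(t)/N_0 = (1/2)^{t/t_{1/2}}$, where $t_{1/2} = \ln 2 / \lambda$. Doubling time for exponential growth $N_0 e^{r t}$ is likewise $\ln 2 / r$. The Python sketch below illustrates these relations. It assumes a pure exponential process, and the function names are hypothetical, chosen only for this illustration.

```python
import math


def remaining_fraction(t: float, half_life: float) -> float:
    """Fraction of the initial quantity left after time t: (1/2) ** (t / half_life)."""
    return 0.5 ** (t / half_life)


def half_life_from_decay_constant(decay_constant: float) -> float:
    """For N(t) = N0 * exp(-decay_constant * t), the half-life is ln(2) / decay_constant."""
    return math.log(2) / decay_constant


def doubling_time_from_growth_rate(growth_rate: float) -> float:
    """For N(t) = N0 * exp(growth_rate * t), the doubling time is ln(2) / growth_rate."""
    return math.log(2) / growth_rate


# After three half-lives, one eighth of the original quantity remains.
print(remaining_fraction(30.0, 10.0))  # 0.125
```

The half-power form and the $e^{-\lambda t}$ form give the same curve. Expressing time in units of half-lives simply makes the "halving" explicit.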

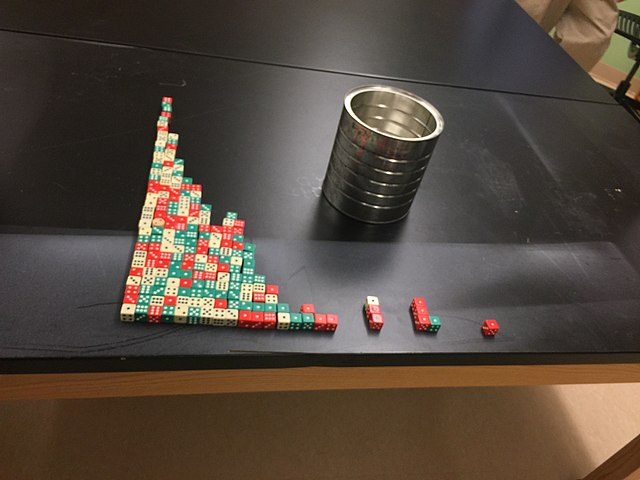

Half-life demonstrated using dice in a classroom experiment

Atoms are the basic particles of the chemical elements. An atom consists of a nucleus of protons and, in nearly all cases, neutrons (ordinary hydrogen has none), surrounded by an electromagnetically bound swarm of electrons. The chemical elements are distinguished from each other by the number of protons in their atoms. For example, any atom that contains 11 protons is sodium, and any atom that contains 29 protons is copper. Atoms with the same number of protons but a different number of neutrons are called isotopes of the same element.
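The classification rule above can be sketched as a small data model. The element is determined by the proton count alone, and two atoms are isotopes when their proton counts match but their neutron counts differ. This is an illustrative Python sketch: the `Atom` class, the `are_isotopes` function, and the deliberately partial lookup table are hypothetical, not taken from any chemistry library.

```python
from dataclasses import dataclass

# Hypothetical, deliberately partial lookup table keyed by proton count (atomic number).
ELEMENT_BY_PROTONS = {1: "hydrogen", 6: "carbon", 11: "sodium", 29: "copper", 79: "gold"}


@dataclass(frozen=True)
class Atom:
    protons: int
    neutrons: int

    @property
    def element(self) -> str:
        # The proton count alone identifies the element; neutrons play no role here.
        return ELEMENT_BY_PROTONS.get(self.protons, f"unknown (Z={self.protons})")


def are_isotopes(a: Atom, b: Atom) -> bool:
    # Isotopes: same element (same proton count) but a different neutron count.
    return a.protons == b.protons and a.neutrons != b.neutrons


print(Atom(11, 12).element)                     # sodium
print(are_isotopes(Atom(29, 34), Atom(29, 36)))  # True: two isotopes of copper
```

Keeping the neutron count in the model but ignoring it in `element` mirrors the point of the paragraph: neutrons distinguish isotopes, not elements.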

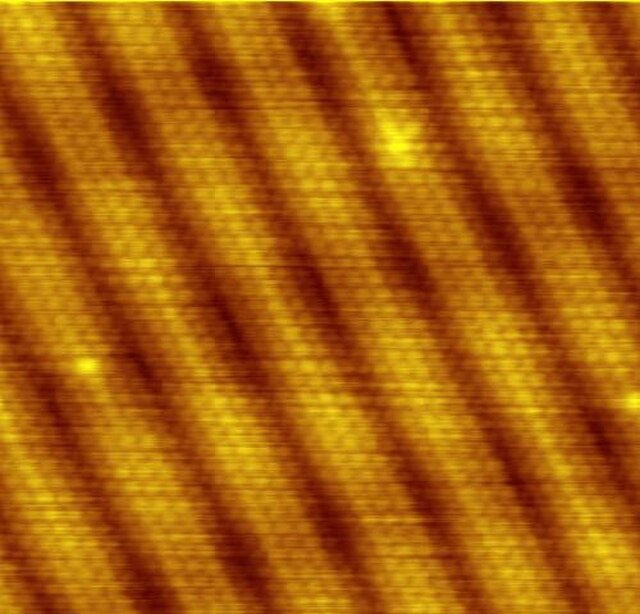

Scanning tunneling microscope image showing the individual atoms that make up this gold (100) surface. The surface atoms deviate from the bulk crystal structure and arrange themselves in columns several atoms wide, with pits between them (a phenomenon known as surface reconstruction).