The IBM 701 Electronic Data Processing Machine, known as the Defense Calculator while in development, was IBM’s first commercial scientific computer and its first series-production mainframe computer. It was announced to the public on May 21, 1952. Designed and developed by Jerrier Haddad and Nathaniel Rochester, it was based on the IAS machine at Princeton.

IBM 701 operator's console

IBM 701 processor frame, showing 1071 of the vacuum tubes

Vacuum tube logic module from a 700 series IBM computer

Williams tube from an IBM 701 at the Computer History Museum

International Business Machines Corporation (IBM), nicknamed Big Blue, is an American multinational technology company headquartered in Armonk, New York, and present in over 175 countries. IBM is the largest industrial research organization in the world, with 19 research facilities across a dozen countries. It held the record for most annual U.S. patents generated by a business for 29 consecutive years, from 1993 to 2021.

NACA researchers using an IBM type 704 electronic data processing machine in 1957

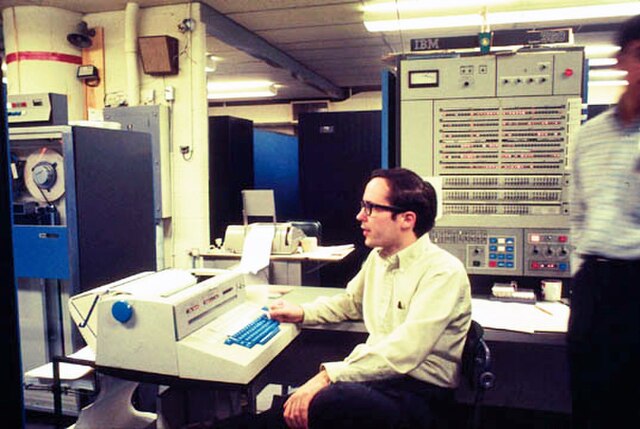

An IBM System/360 in use at the University of Michigan c. 1969

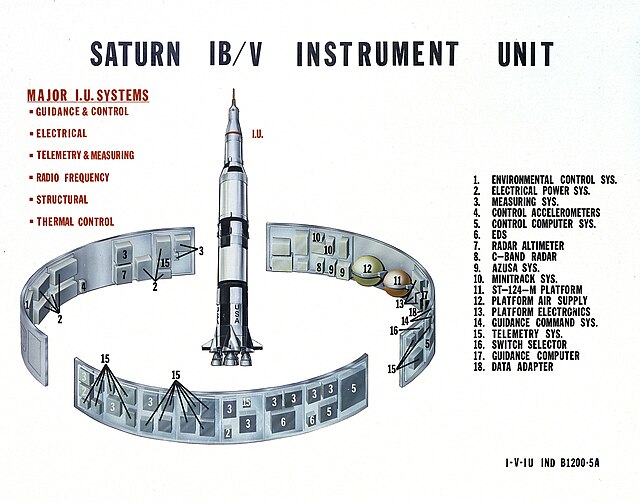

IBM guidance computer hardware for the Saturn V Instrument Unit

IBM CHQ in Armonk, New York in 2014