A motherboard is the main printed circuit board (PCB) in general-purpose computers and other expandable systems. It holds and allows communication between many of the crucial electronic components of a system, such as the central processing unit (CPU) and memory, and provides connectors for other peripherals. Unlike a backplane, a motherboard usually contains significant sub-systems, such as the central processor, the chipset's input/output and memory controllers, interface connectors, and other components integrated for general use.

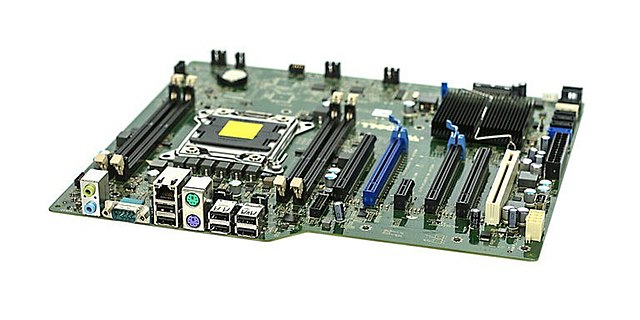

Dell Precision T3600 system motherboard, used in professional CAD workstations. Manufactured in 2012.

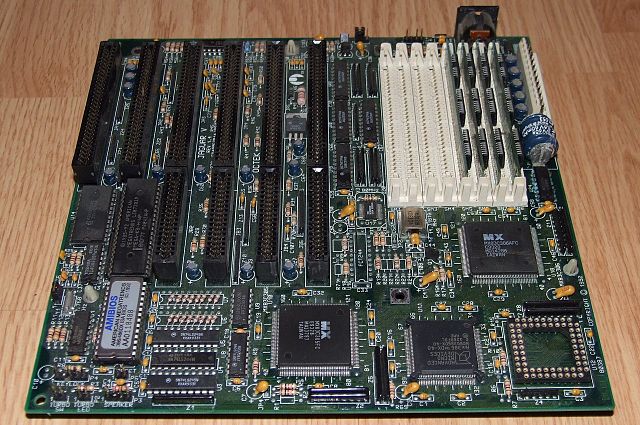

Motherboard for a personal desktop computer, showing the typical components and interfaces found on a motherboard. This model follows the Baby AT form factor, used in many desktop PCs.

Mainboard of a NeXTcube computer (1990) with a Motorola 68040 microprocessor operating at 25 MHz and a Motorola 56001 digital signal processor at 25 MHz, which was directly accessible via a connector on the back of the casing.

The Octek Jaguar V motherboard from 1993. This board has few onboard peripherals, as evidenced by the six slots provided for ISA cards and the lack of other built-in external interface connectors. Note that the large AT keyboard connector at the back right is its only peripheral interface.

Apple Inc. is an American multinational corporation and technology company headquartered in Cupertino, California, in Silicon Valley. It designs, develops, and sells consumer electronics, computer software, and online services. Devices include the iPhone, iPad, Mac, Apple Watch, Vision Pro, and Apple TV; operating systems include iOS, iPadOS, and macOS; and software applications and services include iTunes, iCloud, Apple Music, and Apple TV+.

Apple Park is the company's headquarters in Cupertino, California, in Silicon Valley.

In 1976, Steve Jobs and Steve Wozniak co-founded Apple in Jobs's parents' home on Crist Drive in Los Altos, California. Wozniak called the popular belief that the company was founded in the garage "a bit of a myth", although they moved some operations to the garage when the bedroom became too crowded.

The Apple I is Apple's first product, designed by Wozniak and sold as an assembled circuit board without the required keyboard, monitor, power supply, and the optional case.

The Apple II Plus, designed primarily by Wozniak, was introduced in 1979.