A motion detector is an electrical device that uses a sensor to detect nearby motion. Such a device is often integrated into a system that automatically performs a task or alerts a user to motion in an area. Motion detectors are key components of security, automated lighting, home automation, and energy-efficiency systems, among others.

A motion detector attached to an automatic outdoor light.

A passive infrared (PIR) detector mounted on a circuit board (right), along with a photoresistive detector for visible light (left). This is the type most commonly found in household motion-sensing devices; it is designed to turn on a light only when motion is detected and the surrounding environment is sufficiently dark.

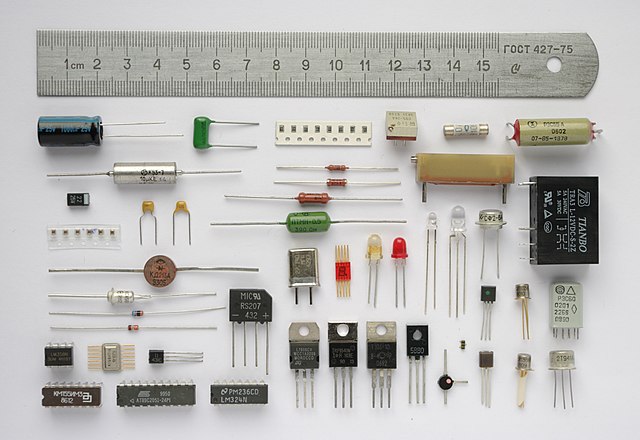
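The switching rule in the caption above combines two sensor readings with a logical AND. The sketch below is a minimal illustration of that rule only, not the circuit of any real product; the function name, the use of lux as the light unit, and the 10-lux "dark" threshold are all hypothetical assumptions.

```python
# Minimal sketch of the "light on only when motion AND dark" rule.
# Hypothetical: the function name, the lux scale, and the 10-lux threshold
# are illustrative assumptions, not values from any particular device.

DARK_THRESHOLD_LUX = 10.0  # assumed cutoff below which the scene counts as "dark"


def should_turn_on_light(motion_detected: bool, ambient_lux: float,
                         dark_threshold_lux: float = DARK_THRESHOLD_LUX) -> bool:
    """Return True only if the PIR detector reports motion and it is dark enough.

    motion_detected: output of the passive infrared (PIR) detector.
    ambient_lux: light level, assumed to be estimated from the photoresistive detector.
    """
    is_dark = ambient_lux < dark_threshold_lux
    return motion_detected and is_dark


if __name__ == "__main__":
    # (motion, lux) pairs: motion in daylight, motion at night, no motion at night
    readings = [(True, 500.0), (True, 2.0), (False, 2.0)]
    for motion, lux in readings:
        print(motion, lux, should_turn_on_light(motion, lux))
```

Only the second reading (motion detected at night) switches the light on. Real devices typically add further behavior on top of this basic condition, which the sketch deliberately leaves out.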

An electronic component is any basic discrete device or physical entity in an electronic system that is used to affect electrons or their associated fields. Electronic components are mostly industrial products, available in singular form, and are not to be confused with electrical elements, which are conceptual abstractions representing idealized electronic components. A datasheet for an electronic component is a technical document that details the component's specifications, characteristics, and performance.

Various electronic components, shown next to a 15 cm ruler for scale.

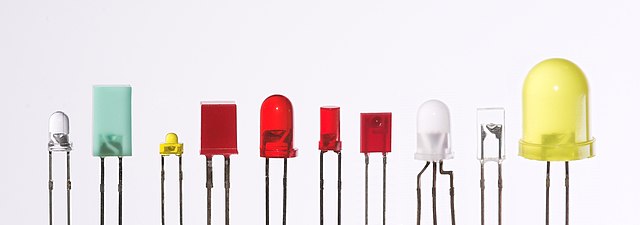

Various light-emitting diodes (LEDs)

Surface-mount (SMD) resistors on the back of a printed circuit board (PCB)

Various capacitors used in electronic equipment