Numerical analysis is the study of algorithms that use numerical approximation for the problems of mathematical analysis. It is the study of numerical methods that attempt to find approximate solutions of problems rather than the exact ones. Numerical analysis finds application in all fields of engineering and the physical sciences, and in the 21st century also the life and social sciences, medicine, business and even the arts. Current growth in computing power has enabled the use of more complex numerical analysis, providing detailed and realistic mathematical models in science and engineering. Examples of numerical analysis include: ordinary differential equations as found in celestial mechanics, numerical linear algebra in data analysis, and stochastic differential equations and Markov chains for simulating living cells in medicine and biology.

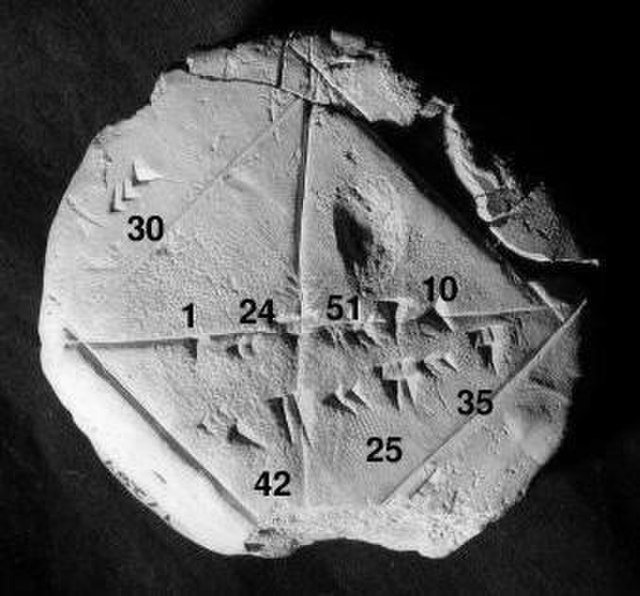

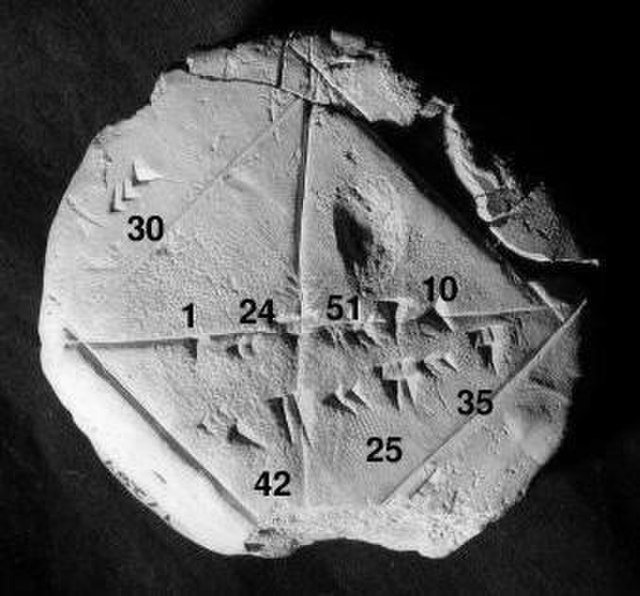

Babylonian clay tablet YBC 7289 (c. 1800–1600 BCE) with annotations. The approximation of the square root of 2 is four sexagesimal figures, which is about six decimal figures. 1 + 24/60 + 51/602 + 10/603 = 1.41421296...

NIST publication

How much for a glass of lemonade?

Sir Edmund Taylor Whittaker was a British mathematician, physicist, and historian of science. Whittaker was a leading mathematical scholar of the early 20th-century who contributed widely to applied mathematics and was renowned for his research in mathematical physics and numerical analysis, including the theory of special functions, along with his contributions to astronomy, celestial mechanics, the history of physics, and digital signal processing.

A 1933 portrait of Whittaker by Arthur Trevor Haddon titled Sir Edmund Taylor Whittaker

The 1913 Colloquium for the Edinburgh Mathematical Society. Whittaker is featured sitting at the far left end of the front row.

Front cover of the Whittaker Memorial Volume published in the Proceedings of the Edinburgh Mathematical Society in June 1958. The Proceedings is a delayed open-access journal, where the contents are free to read one year after publication.