Quantum

In physics, a quantum is the minimum amount of any physical entity involved in an interaction. For electromagnetic radiation, a quantum is a discrete amount of energy proportional to the frequency of the radiation (E = hf, where h is the Planck constant and f is the frequency). The fundamental notion that a property can be "quantized" is referred to as "the hypothesis of quantization": in the simplest case, the magnitude of the physical property can take only discrete values that are integer multiples of one quantum. For example, a photon is a single quantum of light of a specific frequency. Similarly, the energy of an electron bound within an atom is quantized and can take only certain discrete values. Atoms, and matter in general, are stable because electrons can exist only at discrete energy levels within an atom. Quantization is one of the foundations of the much broader physics of quantum mechanics, and the quantization of energy, together with its influence on how energy and matter interact, is part of the fundamental framework for understanding and describing nature.
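The relation between frequency and the energy of one quantum can be made concrete with a little arithmetic. The sketch below assumes the Planck relation E = hf with the exact SI value of the Planck constant h, and uses an illustrative visible-light frequency of 5.0 × 10^14 Hz chosen only for this example. It computes the energy of one quantum and lists the only energies allowed if radiation at that frequency is exchanged in whole quanta (n = 0, 1, 2, ...).

```python
# Planck constant in J*s (exact SI value since the 2019 redefinition)
PLANCK_H = 6.62607015e-34


def quantum_energy(frequency_hz: float) -> float:
    """Energy in joules of one quantum of radiation at the given frequency (E = h*f)."""
    return PLANCK_H * frequency_hz


def allowed_energies(frequency_hz: float, max_quanta: int) -> list[float]:
    """Energies n*h*f for n = 0..max_quanta, i.e. whole-number multiples of one quantum."""
    quantum = quantum_energy(frequency_hz)
    return [n * quantum for n in range(max_quanta + 1)]


# Illustrative frequency (an assumed value, roughly that of visible light)
f = 5.0e14  # Hz
print(quantum_energy(f))       # roughly 3.3e-19 J
print(allowed_energies(f, 3))  # 0, 1, 2 and 3 quanta
```

Any energy between two neighbouring entries of the list is not allowed in this simple picture; doubling the frequency doubles the size of each step.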

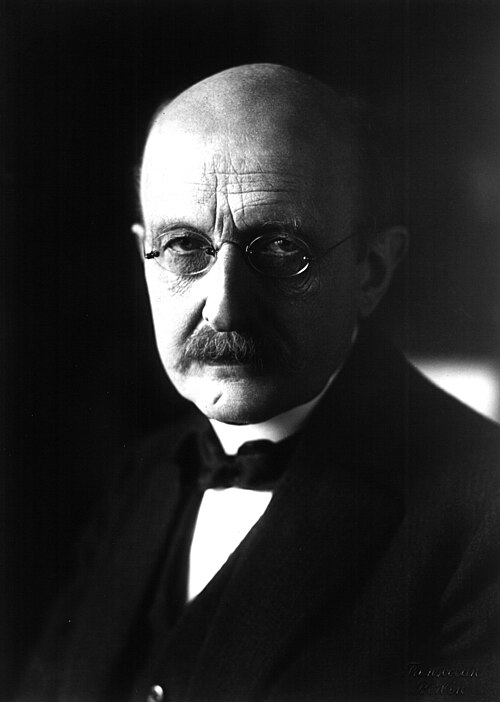

German physicist Max Planck (1858–1947), recipient of the 1918 Nobel Prize in Physics

Photon

A photon is an elementary particle that is a quantum of the electromagnetic field, including electromagnetic radiation such as light and radio waves, and the force carrier for the electromagnetic force. Photons are massless, so they always move at the speed of light in a vacuum. The photon is a boson.
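Because a photon in a vacuum travels at the speed of light c, its frequency f and wavelength λ are related by f = c/λ, so the energy of one photon can be written E = hf = hc/λ. The sketch below uses that relation, with exact SI values for h, c and the electronvolt, to compare a single photon of light with a single photon of a radio wave. The two wavelengths (550 nm for green light, 3 m for FM radio) are illustrative assumptions, not values from the text.

```python
PLANCK_H = 6.62607015e-34       # Planck constant, J*s (exact in SI)
SPEED_OF_LIGHT = 299_792_458.0  # speed of light in vacuum, m/s (exact in SI)
ELECTRONVOLT = 1.602176634e-19  # 1 eV in joules (exact in SI)


def photon_energy_from_wavelength(wavelength_m: float) -> float:
    """Energy (J) of one photon in vacuum: f = c / lambda, then E = h * f."""
    frequency_hz = SPEED_OF_LIGHT / wavelength_m
    return PLANCK_H * frequency_hz


# Illustrative wavelengths (assumed values chosen for this example)
examples = {
    "green light (~550 nm)": 550e-9,
    "FM radio (~3 m)": 3.0,
}

for name, wavelength in examples.items():
    energy_j = photon_energy_from_wavelength(wavelength)
    print(f"{name}: {energy_j:.3e} J = {energy_j / ELECTRONVOLT:.3e} eV")
```

Since E is inversely proportional to λ, a radio photon with a wavelength millions of times longer than that of visible light carries millions of times less energy.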

Gilbert N. Lewis's 1926 letter, which brought the word "photon" into common usage