Randomization is a statistical process in which a random mechanism is used to select a sample from a population or to assign subjects to different groups. It ensures that experimental units or treatment protocols are allocated by chance, which minimizes selection bias and strengthens statistical validity. In experimental design, it permits an objective comparison of treatment effects, because it balances both known and unknown factors across groups, in expectation, at the outset of the study. In statistical terms, it underpins the principle of probabilistic equivalence among groups, allowing unbiased estimation of treatment effects and supporting the generalization of conclusions from sample data to the broader population.
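
As a rough illustration of assigning subjects to groups by a random mechanism, the following Python sketch shuffles a list of subjects and deals them into groups in turn. The function name `assign_groups`, the group labels, and the fixed seed are assumptions made for this example only; real studies often use more elaborate schemes such as blocked or stratified randomization.

```python
import random


def assign_groups(subjects, groups=("treatment", "control"), seed=None):
    """Randomly assign subjects to groups of near-equal size.

    Hypothetical helper for illustration: shuffles a copy of the
    subjects, then deals them to the groups in round-robin order.
    """
    rng = random.Random(seed)      # a seed makes the allocation reproducible
    shuffled = list(subjects)      # copy so the caller's data is untouched
    rng.shuffle(shuffled)          # the random mechanism
    allocation = {group: [] for group in groups}
    for index, subject in enumerate(shuffled):
        allocation[groups[index % len(groups)]].append(subject)
    return allocation


# Ten subjects identified by number; the seed is arbitrary.
allocation = assign_groups(range(1, 11), seed=42)
for group, members in allocation.items():
    print(group, sorted(members))
```

Because the order is determined by chance rather than by the experimenter, no characteristic of a subject, whether measured or not, can systematically influence which group that subject joins.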

Shuffling playing cards

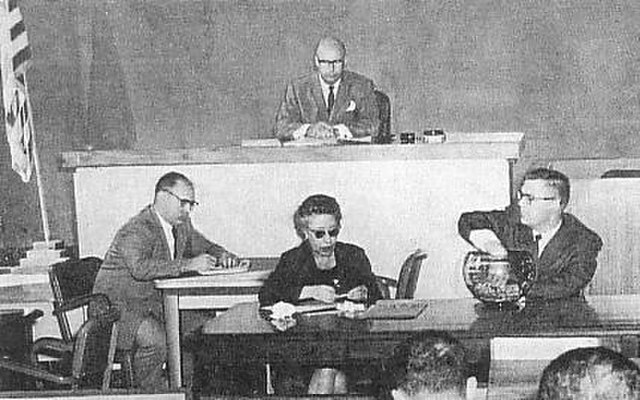

USCAR Court selecting a jury by sortition

Educational tools used to extract a random sample from a pool

A piece of text made using a cut-up technique

Shuffling is a procedure used to randomize a deck of playing cards to provide an element of chance in card games. Shuffling is often followed by a cut, to help ensure that the shuffler has not manipulated the outcome.
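
A shuffle followed by a cut can be sketched in Python as below. This is only a software analogy: it uses the Fisher-Yates algorithm rather than modelling a physical technique such as the overhand or riffle shuffle, and the names `fisher_yates_shuffle` and `cut`, the card labels, and the seed are assumptions for the example. In a real game the cut is normally made by someone other than the shuffler; here the cut position is drawn from the same generator purely for convenience.

```python
import random


def fisher_yates_shuffle(deck, rng):
    """Return a shuffled copy of deck using the Fisher-Yates algorithm."""
    deck = list(deck)
    for i in range(len(deck) - 1, 0, -1):
        j = rng.randint(0, i)              # randint is inclusive at both ends
        deck[i], deck[j] = deck[j], deck[i]
    return deck


def cut(deck, position):
    """Cut the deck: move the top `position` cards to the bottom."""
    return deck[position:] + deck[:position]


ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["S", "H", "D", "C"]
deck = [rank + suit for suit in suits for rank in ranks]

rng = random.Random(7)                     # arbitrary seed for repeatability
shuffled = fisher_yates_shuffle(deck, rng)
cut_position = rng.randrange(1, len(shuffled))
final_deck = cut(shuffled, cut_position)
print(final_deck[:5])
```

The cut only rotates the deck, so it adds little randomness by itself; its purpose, as described above, is to guard against a shuffler who has arranged the cards.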

Overhand shuffle

Cards lifted after a riffle shuffle, forming what is called a bridge, which puts the cards back into place

After a riffle shuffle, the cards cascade

Shuffling trick