In electronics, a sample and hold circuit is an analog device that samples the voltage of a continuously varying analog signal and holds its value at a constant level for a specified minimum period of time. Sample and hold circuits and related peak detectors are the elementary analog memory devices. They are typically used in analog-to-digital converters to eliminate variations in the input signal that can corrupt the conversion process. They are also used in electronic music, for instance to impart a random quality to successively played notes.
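The sample-then-hold behaviour described above can be illustrated with a minimal discrete-time sketch. It is an idealized model, not a circuit simulation: the function name `sample_and_hold` and the parameter `hold_period` are hypothetical, and effects such as acquisition time and droop are ignored.

```python
def sample_and_hold(signal, hold_period):
    """Idealized sample and hold on a list of input values.

    Every `hold_period` steps the current input is sampled; between
    sampling instants the output stays at the last sampled value.
    (Hypothetical helper for illustration only.)
    """
    if hold_period < 1:
        raise ValueError("hold_period must be at least 1")
    held = []
    current = None
    for i, value in enumerate(signal):
        if i % hold_period == 0:
            current = value  # sampling instant: capture the input
        held.append(current)  # hold phase: repeat the captured value
    return held


# A steadily rising input becomes a staircase at the output.
ramp = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
print(sample_and_hold(ramp, 3))
```

Feeding a noise source into such a stage, instead of a ramp, gives a sequence of unrelated held values, which is roughly the effect exploited in electronic music.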

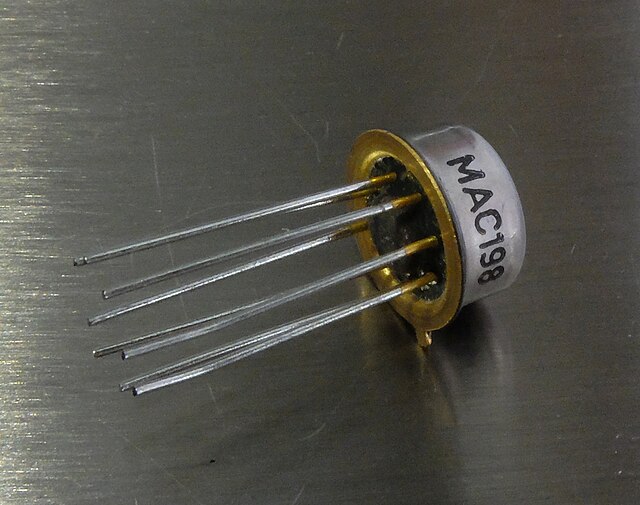

A sample-and-hold integrated circuit (Tesla MAC198)

The sample and hold stencil on a Korg ARP Odyssey synthesizer.

Analog-to-digital converter

In electronics, an analog-to-digital converter (ADC) is a system that converts an analog signal, such as a sound picked up by a microphone or light entering a digital camera, into a digital signal. An ADC may also provide an isolated measurement, as in an electronic device that converts an analog input voltage or current to a digital number representing the magnitude of that voltage or current. Typically the digital output is a two's complement binary number that is proportional to the input, but there are other possibilities.
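As a rough illustration of a two's complement output proportional to the input, the sketch below models an ideal bipolar converter. The function name `quantize_twos_complement`, the reference voltage `v_ref`, and the input range of `-v_ref` to just under `+v_ref` are assumptions made for this example; real converters differ in range, coding, and rounding behaviour.

```python
def quantize_twos_complement(voltage, v_ref, bits):
    """Idealized ADC model (hypothetical helper for illustration).

    Maps a voltage in [-v_ref, +v_ref) to a signed integer code and
    returns it together with its two's complement bit pattern.
    Out-of-range inputs are clipped to the nearest valid code.
    """
    if bits < 2:
        raise ValueError("bits must be at least 2")
    if v_ref <= 0:
        raise ValueError("v_ref must be positive")
    min_code = -(1 << (bits - 1))
    max_code = (1 << (bits - 1)) - 1
    lsb = v_ref / (1 << (bits - 1))  # voltage step per output code
    code = round(voltage / lsb)      # nearest code (Python rounds ties to even)
    code = max(min_code, min(max_code, code))  # clip at full scale
    # Masking with 2**bits - 1 yields the two's complement bit pattern.
    pattern = format(code & ((1 << bits) - 1), f"0{bits}b")
    return code, pattern


print(quantize_twos_complement(0.5, 1.0, 8))   # (64, '01000000')
print(quantize_twos_complement(-1.0, 1.0, 8))  # (-128, '10000000')
```

The code is proportional to the input up to the rounding step, and a positive full-scale input clips to the largest positive code because two's complement has one more negative code than positive.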

4-channel stereo multiplexed analog-to-digital converter WM8775SEDS made by Wolfson Microelectronics placed on an X-Fi Fatal1ty Pro sound card

AD570 8-bit successive-approximation analog-to-digital converter

Intersil ICL7107, a 3.5-digit single-chip A/D converter (i.e. conversion from analog to a numeric range of 0 to 1999, versus 0 to 999 for a 3-digit converter; typically used in meters, counters, etc.)

ICL7107 silicon die