A server is a computer that provides information to other computers called "clients" on computer network. This architecture is called the client–server model. Servers can provide various functionalities, often called "services", such as sharing data or resources among multiple clients or performing computations for a client. A single server can serve multiple clients, and a single client can use multiple servers. A client process may run on the same device or may connect over a network to a server on a different device. Typical servers are database servers, file servers, mail servers, print servers, web servers, game servers, and application servers.

Wikimedia Foundation rackmount servers on racks in a data center

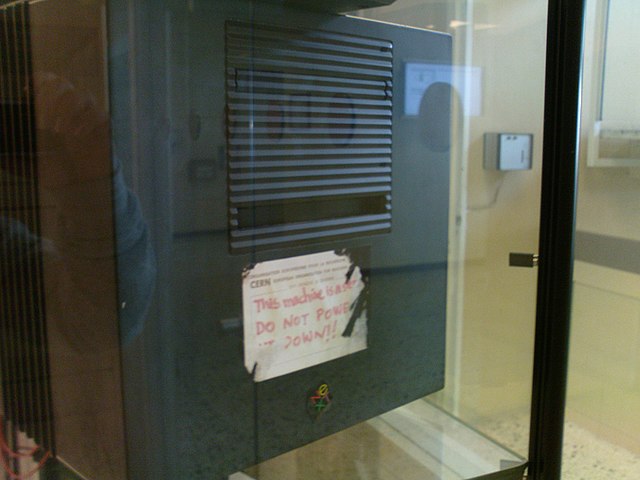

The first WWW server is located at CERN with its original sticker that says: "This machine is a server. DO NOT POWER IT DOWN!!"

A rack-mountable server with the top cover removed to reveal internal components

A server rack seen from the rear

Client is a computer that gets information from another computer called server in the context of client–server model of computer networks. The server is often on another computer system, in which case the client accesses the service by way of a network.

A thin client computer