A coprocessor is a computer processor used to supplement the functions of the primary processor. Operations performed by a coprocessor may include floating-point arithmetic, graphics, signal processing, string processing, cryptography, or I/O interfacing with peripheral devices. By offloading processor-intensive tasks from the main processor, coprocessors can improve system performance. Coprocessors also allow a line of computers to be customized, so that customers who do not need the extra performance need not pay for it.

AM9511-1 arithmetic coprocessor

Intel 80386DX CPU with 80387DX math coprocessor

A central processing unit (CPU), also called a central processor, main processor, or just processor, is the most important processor in a given computer. Its electronic circuitry executes the instructions of a computer program, such as arithmetic, logic, control, and input/output (I/O) operations. This role contrasts with that of external components, such as main memory and I/O circuitry, and with that of specialized coprocessors such as graphics processing units (GPUs).
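The four kinds of operation named above can be illustrated with a toy interpreter. This is only a minimal sketch, not a model of any real CPU: the accumulator design, the opcode names (`IN`, `OUT`, `ADD`, `AND`, `JNZ`, `HALT`), and the `run` function are all hypothetical and chosen purely for illustration.

```python
def run(program, inputs=()):
    """Execute a toy program: a list of (opcode, operand...) tuples.

    Hypothetical single-accumulator machine, for illustration only.
    Returns the list of values written by OUT instructions.
    """
    acc = 0                  # single accumulator register
    pc = 0                   # program counter: index of the next instruction
    pending = list(inputs)   # values waiting to be read by IN
    output = []              # values written by OUT

    while pc < len(program):
        op, *args = program[pc]   # fetch and decode
        pc += 1
        if op == "IN":            # I/O: read one input value
            acc = pending.pop(0)
        elif op == "OUT":         # I/O: write the accumulator
            output.append(acc)
        elif op == "ADD":         # arithmetic
            acc += args[0]
        elif op == "AND":         # logic (bitwise)
            acc &= args[0]
        elif op == "JNZ":         # control: jump if accumulator is non-zero
            if acc != 0:
                pc = args[0]
        elif op == "HALT":        # control: stop execution
            break
        else:
            raise ValueError(f"unknown opcode: {op!r}")
    return output


# Example program: read a number, then count down to 1.
COUNTDOWN = [
    ("IN",),       # 0: acc = next input
    ("OUT",),      # 1: emit acc
    ("ADD", -1),   # 2: acc = acc - 1
    ("JNZ", 1),    # 3: loop back to 1 while acc != 0
    ("HALT",),     # 4: stop
]
```

Calling `run(COUNTDOWN, [3])` steps through the loop three times. A real processor performs the same fetch, decode, and execute cycle in hardware, with the instruction encoding fixed by its instruction set architecture rather than by Python tuples.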

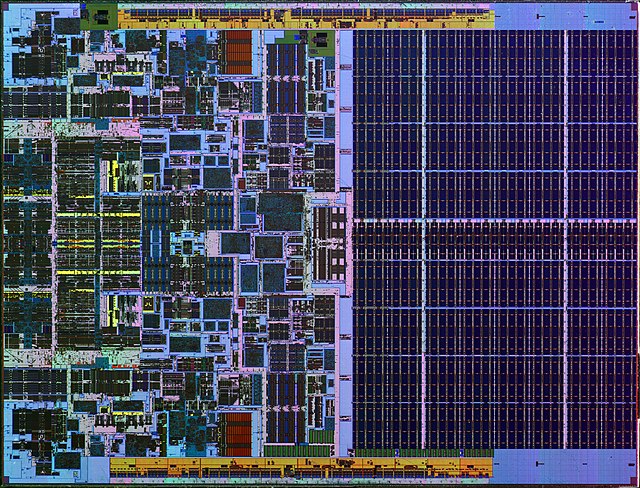

Inside a central processing unit: The integrated circuit of Intel's Xeon 3060, first manufactured in 2006

EDVAC, one of the first stored-program computers

IBM PowerPC 604e processor

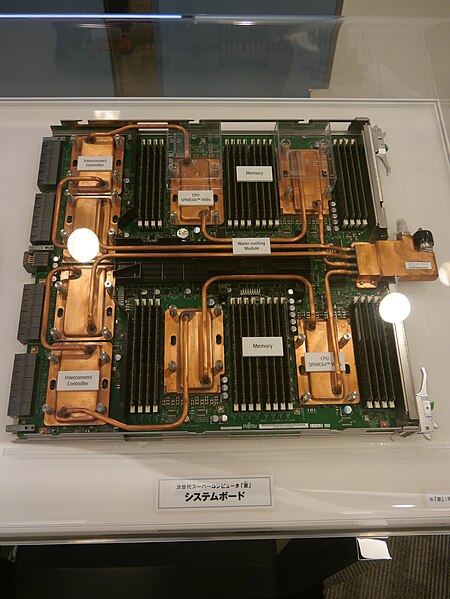

Fujitsu board with SPARC64 VIIIfx processors