A live CD is a complete bootable computer installation, including an operating system, that loads from a CD-ROM or similar storage device into a computer's memory and runs from there, rather than loading from a hard disk drive. A live CD allows users to run an operating system for any purpose without installing it or making any changes to the computer's configuration. Live CDs can run on a computer that has no secondary storage, such as a hard disk drive, or one whose hard disk drive or file system is corrupted, which makes them useful for data recovery.

CD-ROM of the LGX Yggdrasil Linux distribution release "Fall 1993"

Virtual OpenBSD machine configuration in VirtualBox with live image file (6.3-Release-i386-bootonly.iso)

In computing, booting is the process of starting a computer, initiated either by hardware, such as a button on the computer, or by a software command. After it is switched on, a computer's central processing unit (CPU) has no software in its main memory, so some process must load software into memory before it can be executed. This may be done by hardware or firmware in the CPU, or by a separate processor in the computer system.
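The loading step described above can be illustrated with a toy model. The following is only a minimal sketch, not a description of any real firmware: the memory size, the two-instruction "program" format, and the function names `power_on`, `bootstrap`, and `run` are all hypothetical and chosen for illustration.

```python
# Toy model of booting. Main memory starts empty, and a small fixed
# bootstrap routine (standing in for hardware or firmware) copies a
# program from a boot medium into memory before anything can execute.
# All names and the instruction format are hypothetical.

MEMORY_SIZE = 16


def power_on():
    """Return main memory as it is right after power-on: no software."""
    return [None] * MEMORY_SIZE


def bootstrap(memory, boot_medium, load_address=0):
    """Copy the program on the boot medium into memory; return its entry point."""
    if len(boot_medium) > len(memory) - load_address:
        raise ValueError("program does not fit in memory")
    for offset, instruction in enumerate(boot_medium):
        memory[load_address + offset] = instruction
    return load_address


def run(memory, entry_point):
    """Execute instructions from memory until HALT; return the collected output."""
    output = []
    pc = entry_point  # program counter
    while pc < len(memory):
        instruction = memory[pc]
        if instruction is None:
            raise RuntimeError(f"no software in memory at address {pc}")
        op, arg = instruction
        if op == "PRINT":
            output.append(arg)
        elif op == "HALT":
            return output
        else:
            raise ValueError(f"unknown instruction {op!r}")
        pc += 1
    raise RuntimeError("ran off the end of memory without HALT")


# The boot medium plays the role of a live CD or disk holding the program.
boot_medium = [("PRINT", "kernel loaded"), ("HALT", None)]

memory = power_on()
entry = bootstrap(memory, boot_medium)
print(run(memory, entry))  # prints ['kernel loaded']
```

Calling `run` on freshly powered-on memory, before `bootstrap` has copied anything in, raises an error in this model, which mirrors the point that nothing can execute until some mechanism has loaded software into memory.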

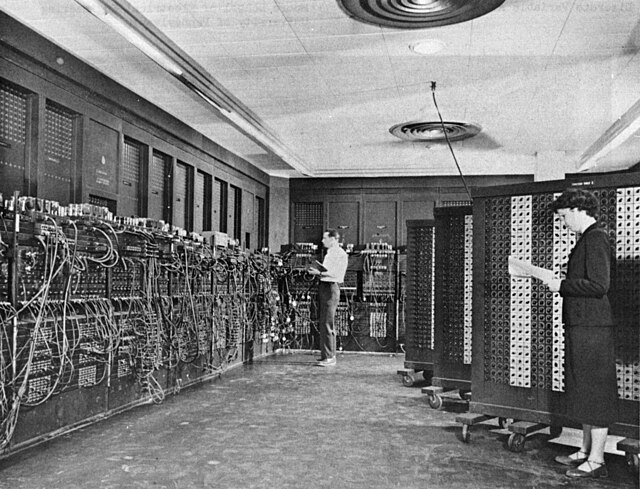

Switches and cables used to program ENIAC (1946)

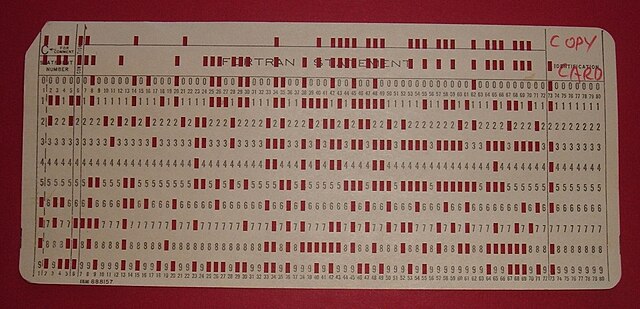

Initial program load punched card for the IBM 1130 (1965)

IBM System/3 console from the 1970s. The program load selector switch is at the lower left; the program load switch is at the lower right.

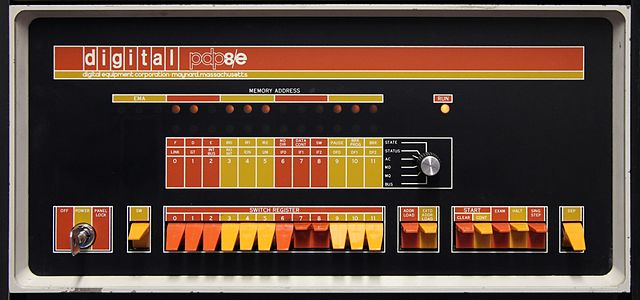

PDP-8/E front panel showing the switches used to load the bootstrap program