Photographic plates preceded photographic film as a capture medium in photography. The light-sensitive emulsion of silver salts was coated on a glass plate, typically thinner than common window glass. Plates were heavily used in the late 19th century, declined through the 20th, and remained in use in some communities until the late 20th century.

AGFA photographic plates, 1880

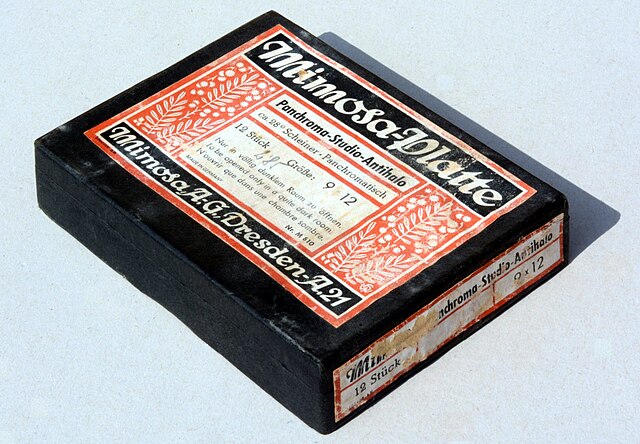

Mimosa Panchroma-Studio-Antihalo Panchromatic glass plates, 9 × 12 cm, Mimosa A.-G. Dresden

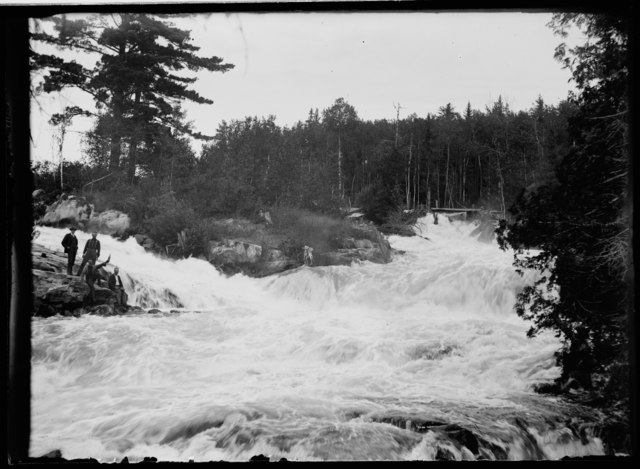

Negative plate

Image resulting from a glass plate negative showing Devil's Cascade in 1900.

Photographic film is a strip or sheet of transparent film base coated on one side with a gelatin emulsion containing microscopically small light-sensitive silver halide crystals. The sizes and other characteristics of the crystals determine the sensitivity, contrast, and resolution of the film. Film is typically segmented into frames, each of which gives rise to a separate photograph.

Undeveloped 35 mm film roll

A roll of 400 speed Kodak 35 mm film

A Polaroid instant photograph

135 film cartridge with DX barcode (top) and DX CAS code on the black-and-white grid below the barcode. The CAS code shows the ISO speed, the number of exposures, and the exposure latitude (+3/−1 for print film).